by Sarvesh Pimpalkar

Previously, we discussed the important role that inertial measurement units (IMUs) play in localization for autonomous mobile robots (AMRs) in Localization: The Key to Truly Autonomous Mobile Robots on the element14 Community. Today, we will elaborate on how navigation relies on a fusion of sensor technologies working together to give AMRs true freedom within dynamically changing environments.

So how do mobile robots learn to get around? As kids, we are all taught to “stop, look and listen” before crossing a road, but does the same concept apply to robots? As humans, we rely on our eyes and ears to help us “navigate” our environment. Robots, on the other hand, use sensors to build an awareness of their surroundings.

AMRs use Simultaneous Localization and Mapping (SLAM) techniques to navigate. The process involves driving the AMR around the facility while it scans its environment. These scans are then combined to generate a complete map of the area. AMRs use an array of sensors and algorithms for localization and navigation. Sensor technologies such as industrial vision time-of-flight cameras, radar, and lidar are the “eyes” of an AMR, and their output is combined with data from IMUs and wheel odometry (position encoders).
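To make the idea of combining scans into a map more concrete, here is a minimal Python sketch. It assumes the robot pose at each scan is already known, which a real SLAM system has to estimate at the same time, and it only marks the grid cells where lidar beams end. The function names, the grid resolution, and the layout of each scan tuple are illustrative assumptions and do not come from any particular SLAM package.

```python
import math


def scan_to_cells(pose, ranges, angle_min, angle_increment,
                  resolution=0.1, max_range=10.0):
    """Convert one 2D lidar scan into the set of grid cells hit by a beam.

    pose: (x, y, theta) of the robot in the map frame (metres, radians).
    ranges: beam distances in metres, ordered from angle_min upwards.
    """
    x, y, theta = pose
    cells = set()
    for i, r in enumerate(ranges):
        if not (0.0 < r < max_range):
            continue  # skip invalid or out-of-range beams
        beam_angle = theta + angle_min + i * angle_increment
        hit_x = x + r * math.cos(beam_angle)
        hit_y = y + r * math.sin(beam_angle)
        # Index of the grid cell that contains the beam end point.
        cells.add((math.floor(hit_x / resolution),
                   math.floor(hit_y / resolution)))
    return cells


def build_map(scans, resolution=0.1, max_range=10.0):
    """Merge many scans into one set of occupied cells.

    scans: iterable of (pose, ranges, angle_min, angle_increment) tuples.
    """
    occupied = set()
    for pose, ranges, angle_min, angle_increment in scans:
        occupied |= scan_to_cells(pose, ranges, angle_min, angle_increment,
                                  resolution, max_range)
    return occupied
```

A production mapper would also trace the free space along each beam and keep a probability per cell instead of a simple occupied set, but the core step of projecting each range reading through the robot pose is the same.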

However, no single sensor is perfect. The true power lies in the diverse sensor types working together to produce effortless navigation in dynamically changing environments.

Each sensor has strengths and weaknesses, which are balanced out when navigation relies on more than one sensor type. Let’s consider how multiple sensors can enhance overall AMR performance.
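One simple way to see why several imperfect sensors beat a single one is inverse-variance weighting, where each reading is trusted in proportion to how precise it is. The sketch below is a generic statistical illustration, not the method of any specific AMR stack, and the example numbers are made up.

```python
def fuse(measurements):
    """Fuse independent estimates of the same quantity.

    measurements: list of (value, variance) pairs.
    Returns (fused_value, fused_variance).
    """
    weight_sum = sum(1.0 / var for _, var in measurements)
    value = sum(v / var for v, var in measurements) / weight_sum
    return value, 1.0 / weight_sum


# Hypothetical readings of the distance to a wall, in metres:
# a precise lidar return and a noisier radar return.
distance, variance = fuse([(10.0, 0.04), (10.4, 0.16)])
```

The fused variance is always smaller than the smallest input variance, which is the numerical counterpart of sensors balancing out each other's weaknesses.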

Environmental Factors while Navigating

Lidar sensors can be sensitive to various environmental factors, such as ambient light, dust, fog, and rain. These factors can degrade the quality of the sensor data and, in turn, affect the performance of the SLAM algorithm. Similarly, other sensor modalities can be affected by reflective surfaces and by dynamic objects (other AMRs or workers), which further confuses SLAM. The table below summarizes how the environment affects different sensor modalities.

Table 1: Comparison table of sensor modalities

While IMUs and wheel odometry are not affected by visual elements within the working environment, using their data in conjunction with visual data means the AMR can operate better across the scenarios it encounters. Let’s consider the challenge of navigating on a sloping floor surface.

Navigating on a Slope

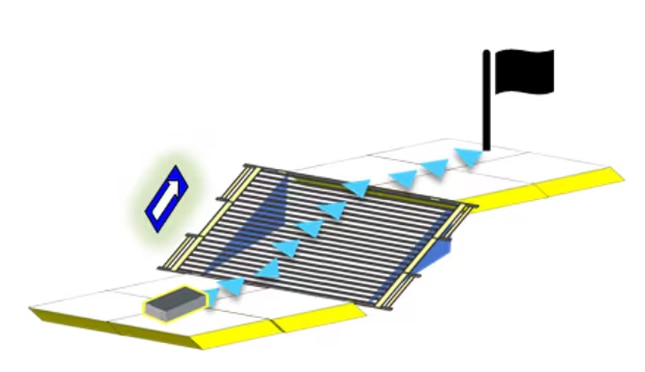

Traditional SLAM algorithms that rely on 2D lidar run into difficulty on a slope, because the 2D point data carries no gradient information. Consequently, slopes are misconstrued as walls or obstacles, which leads to higher costs in the cost map. As a result, conventional SLAM approaches with 2D systems become ineffective on slopes. IMUs help solve this challenge by supplying the gradient information needed to negotiate a slope.
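As a rough illustration of how gradient information can come from an IMU, the sketch below estimates tilt from a single accelerometer reading. It assumes the robot is stationary or moving at constant velocity, so the accelerometer measures only the reaction to gravity, and it assumes a body frame with x forward, y left, and z up. The function names are hypothetical. In practice this estimate would normally be blended with gyroscope data, because vehicle acceleration and vibration corrupt it.

```python
import math


def tilt_from_accel(ax, ay, az):
    """Return (roll, pitch) in radians from a static accelerometer reading.

    Assumes x forward, y left, z up, so a level robot reads (0, 0, +g).
    With this convention a positive pitch means the nose points downhill.
    """
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return roll, pitch


def incline_angle(ax, ay, az):
    """Total angle in radians between the floor normal and vertical."""
    return math.atan2(math.hypot(ax, ay), az)
```

A navigation stack could compare the incline angle against the steepest ramp in the facility before deciding whether a lidar return is a wall or simply the floor rising ahead.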

How does the sensor data get combined?

In a typical Robot Operating System (ROS) setup, data from vision sensors, the IMU, and wheel odometry is combined through a process called sensor fusion. A widely used open-source ROS package is robot_localization [1], which uses extended Kalman filter (EKF) algorithms at its core. By fusing data from diverse sensors such as lidar, cameras, IMUs, and wheel encoders, the EKF produces a better estimate of the robot's state and a better understanding of its environment. Through recursive estimation, the EKF refines the robot's position, orientation, and velocity while a comprehensive map of the surroundings is created and updated. This fusion of sensor data enables mobile robots to overcome individual sensor limitations and navigate complex terrain with greater precision and reliability. Techniques like the EKF draw on the collective insight of the various sensor modalities, allowing AMRs to perceive and interact with their environment effectively and to navigate autonomously.
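The predict and update cycle behind this kind of fusion can be sketched in a few lines of Python. This is a toy filter over a planar pose [x, y, theta], written only to illustrate the technique. It is not the robot_localization implementation, which tracks a larger 3D state. The class name, noise values, and the choice of which sensor feeds which step are assumptions: wheel odometry supplies the forward speed, the IMU gyroscope supplies the yaw rate, and an external source such as lidar scan matching supplies direct observations of individual state components.

```python
import math


def wrap(angle):
    """Wrap an angle to the interval [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


def transpose(A):
    return [list(row) for row in zip(*A)]


class PoseEKF:
    """Toy EKF over the planar pose [x, y, theta]."""

    def __init__(self, initial_variance=0.1):
        self.x = [0.0, 0.0, 0.0]
        self.P = [[initial_variance if i == j else 0.0 for j in range(3)]
                  for i in range(3)]

    def predict(self, v, omega, dt, q=0.01):
        """Propagate with wheel speed v (m/s) and gyro yaw rate omega (rad/s)."""
        px, py, th = self.x
        self.x = [px + v * math.cos(th) * dt,
                  py + v * math.sin(th) * dt,
                  wrap(th + omega * dt)]
        # Jacobian of the nonlinear motion model with respect to the state.
        F = [[1.0, 0.0, -v * math.sin(th) * dt],
             [0.0, 1.0, v * math.cos(th) * dt],
             [0.0, 0.0, 1.0]]
        FPFt = matmul(matmul(F, self.P), transpose(F))
        # Add process noise on the diagonal (q is an assumed tuning value).
        self.P = [[FPFt[i][j] + (q * dt if i == j else 0.0)
                   for j in range(3)] for i in range(3)]

    def update(self, index, z, r):
        """Correct with a direct measurement z of state[index], variance r."""
        innovation = z - self.x[index]
        if index == 2:
            innovation = wrap(innovation)
        s = self.P[index][index] + r               # innovation variance
        k = [self.P[i][index] / s for i in range(3)]  # Kalman gain
        self.x = [self.x[i] + k[i] * innovation for i in range(3)]
        self.x[2] = wrap(self.x[2])
        row = self.P[index][:]
        self.P = [[self.P[i][j] - k[i] * row[j] for j in range(3)]
                  for i in range(3)]
```

Calling `predict` at the odometry rate and `update` whenever a slower or less frequent measurement arrives mirrors the recursive estimation described above: each prediction grows the uncertainty, and each measurement shrinks it again.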

A future blog in this series will cover the Robot Operating System in more detail. The aim of this blog, however, is to leave you confident that sensor fusion increases reliability and data quality while providing greater safety for objects and people within the environment, because AMRs aren’t relying on a single means to navigate. To learn more, visit analog.com/mobile-robotics.

Reference / Resources:

1 https://docs.ros.org/en/melodic/api/robot_localization/html/index.html

ADTF3175 1 MegaPixel Time-of-Flight Module

ADTF3175BMLZ ANALOG DEVICES, Imaging Module, 1024 x 1024 Active Pixel, 3.5µm x 3.5µm | Farnell® Ireland

EVAL-ADTF3175 Time-of-Flight Evaluation Kit

EVAL-ADTF3175D-NXZ ANALOG DEVICES, Evaluation Board, ADTF3175, ADSD3100 | Farnell® Ireland