Introduction

Sometimes, one wants to get started with a microcontroller project without a lot of setup overhead. If you’ve seen Pi Pico C or C++ projects on GitHub that you wish to work with, or if you wish to write your own Pi Pico projects but do not wish to go through the effort of setting up the development environment for the Pi Pico, then this blog post may be of use.

This blog post provides just one example approach; there are many others. The approach I've decided to write about involves Visual Studio Code (VS Code), and Docker Desktop.

Visual Studio Code provides the code editing functionality and a button to click on to build (i.e., compile) the code. You end up with a firmware binary file with a .uf2 suffix, ready for uploading to the Pi Pico (this is done via USB file drag-and-drop).

Docker Desktop is a piece of software that, once installed and run, does not need much interaction. Docker Desktop in the background provides a ‘container’ environment that stores the code and compiler. A container is a bit like a virtual machine.

For following this blog post, no previous knowledge is required about VS Code or Docker Desktop, and, as you’ll see, the learning curve will be pretty much restricted to just navigating VS Code graphical menus.

Software Installation

On your PC (I tested this on Windows, but it should work fine on Mac and Linux too, although some steps may differ very slightly), install two things: Docker Desktop and (if you don’t already have it installed) Visual Studio Code.

Start up Docker Desktop (it must be running for the rest of the steps to work), and start up Visual Studio Code, and that’s where we will begin.

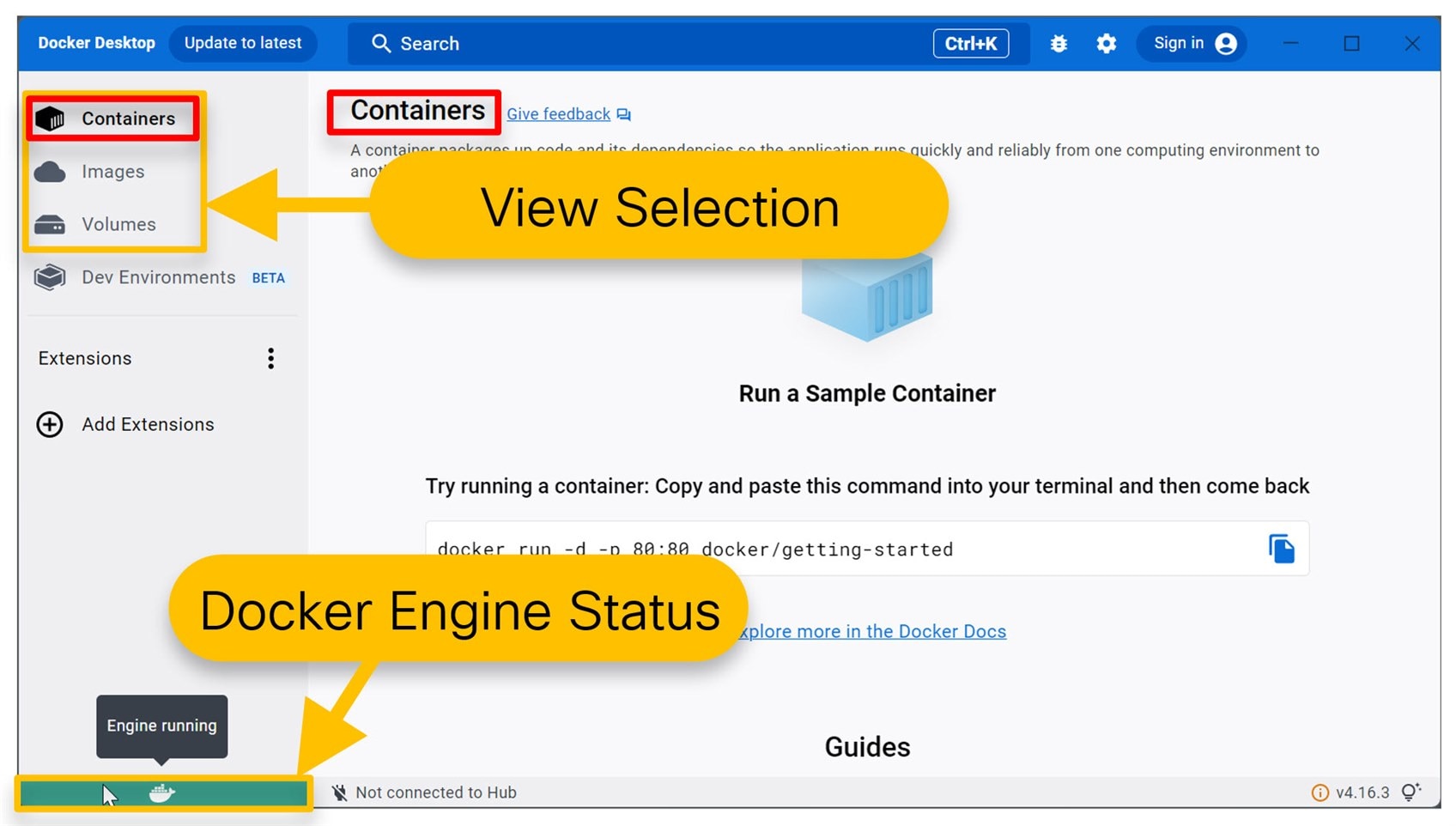

As mentioned in the introduction, you won’t need to interact much with Docker Desktop usually. It does its thing in the background; however, just in case you’re curious, the screenshot below shows some of the interesting features. The lower-left green block indicates that the Docker Engine is running (if it is stopped, it needs to be started!). The left side allows the user to select different views for the main pane. You’ll be mainly interested in container status; in the screenshot below, no container is running.

Configure Visual Studio Code

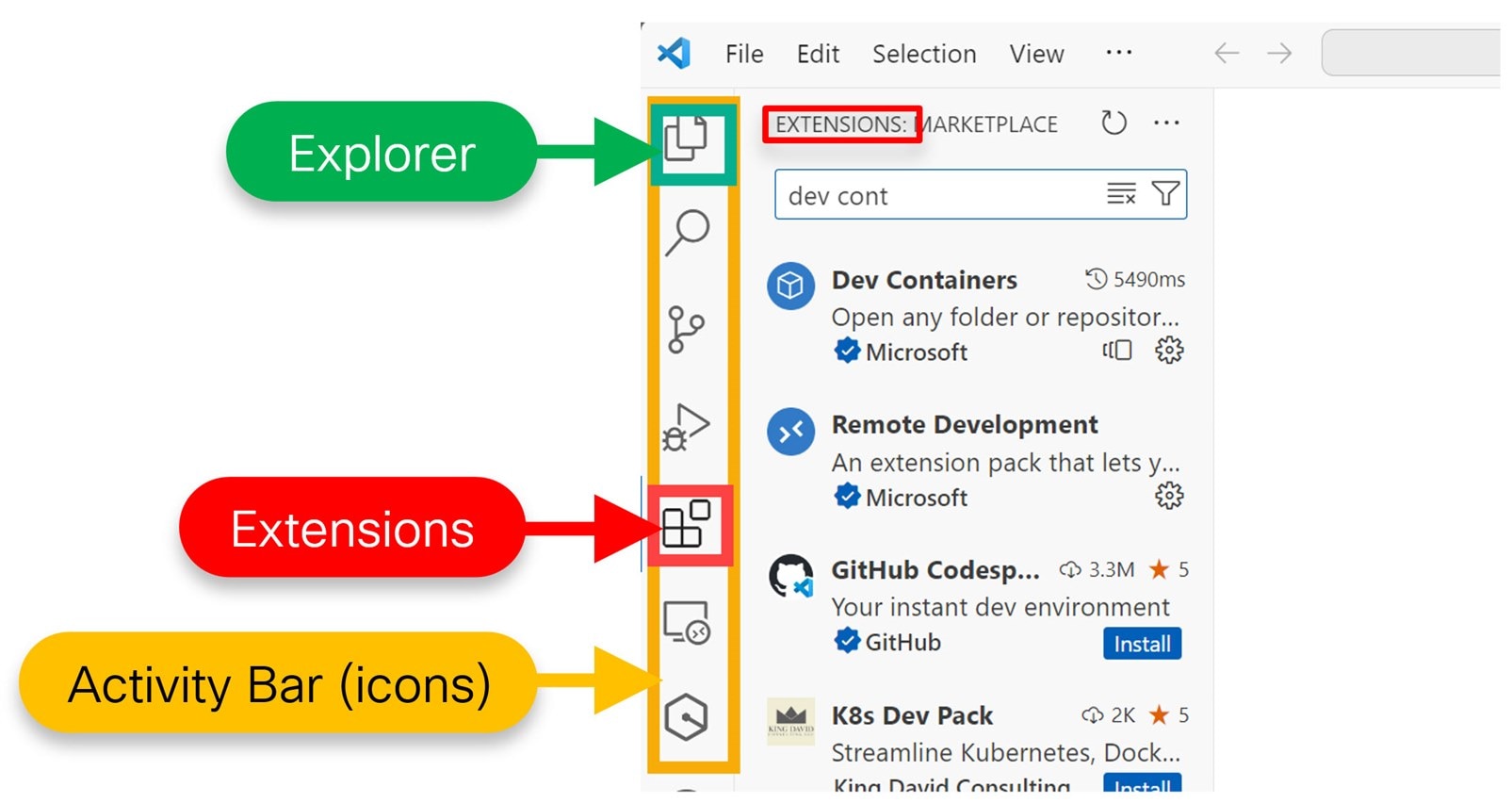

The left side of VS Code has a palette of icons known as the Activity Bar. Two of the most popular icons that will be used are the Extensions and Explorer views. Extensions are add-on features that can be installed, and the Explorer usually shows the files list in the project.

Click on the Extensions icon, which looks like blocks, and you’ll see a list of add-on features with a search box at the top. In that search box, type Dev Containers to find that extension and then click its Install button. Later, you’ll need to use the Extensions pane again, so make a mental note about how to navigate to it : )

Open a Dev Containers Project

The lower-left corner of VS Code (it will be colored blue or green) has a connection icon, and it is used to make remote connections. If you click there, a menu pops up. Click on Clone Repository in Container Volume.

In the box that appears, paste in the following URL and then press Enter:

https://github.com/shabaz123/project1.git

That URL is to a pre-prepared repository set up for a Pi Pico project inside a Development Container.

Now, VS Code will go off and prepare a container. It will take several minutes. Click on show log as shown in the screenshot below, to see what’s going on if you’re interested!

Once it is complete, you’ll see the project files (such as main.c) listed on the left side. Click on the Explorer icon on the left side if you can’t see the project files.

The screenshot below shows what everything should look like at this stage:

Out of interest, if you look at Docker Desktop, you’ll see that a container was created with a random name, such as stupefied_lama. If you wanted to, you could start or stop the container by clicking on the icon in the Actions column, or you could perform other actions (such as going into a command line shell inside the container) by clicking on the three-dots icon in the Actions column.

Although the project is now open in VS Code, one more thing needs to be done, and that is to enable a CMake Tools extension inside the container.

Click on Extensions on the left palette of icons as before, and type CMake Tools, and then install it as shown in the screenshot below.

After 30 seconds or so, it will be installed. Ignore any pop-ups referring to configuring the project. VS Code tends to produce a lot of pop-ups.

Once installed, you can close the informational page by clicking on the X at the top, and then go back to the Explorer view for the left pane, since that’s most useful when coding.

Building a Dev Containers Project

If you look at the bottom bar of the VS Code window, you’ll see text labeled Build. Click it : )

You may be prompted to select a configure preset, in which case click on Custom configure preset.

If all goes well, you’ll see the messages Build completed and Build finished with exit code 0. The final built .uf2 file can be seen by expanding the out folder in the files pane.

Right-click on the .uf2 file, and click on Download, and save it to your PC.

Examining Project Code

This first project doesn’t do a lot; it just blinks the green LED on the Pi Pico at a rate of about two blinks per second.

Some of the code is in main.c, and some happens to be in a file called extrafunc.c. If you click on the function name led_setup, and then press F12, VS Code will open up the file that contains that function so you can easily follow the code.

Note that VS Code can do a lot more, although some of it will need to be configured, which is not in the scope of this blog post.

Revisiting the Project

When you’ve finished with your project, you can close it within Visual Studio Code: click on the blue Dev Container remote connection box in the lower-left corner, scroll down the list of options, and select Close Remote Connection.

If you come back to it later and wish to reconnect, first go into Docker Desktop and, if the container is stopped, start it using the triangular start button in the Actions column for the listed containers. Then, click on the lower-left remote connection icon in VS Code, select Attach to Running Container, and choose the container name from the list that is presented. In the Explorer view, click on Open Folder, type /workspaces/project1, and click on OK.

You’ll now be able to work with the project, and build the code, as before.
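If you prefer a terminal on your PC to the Docker Desktop window, the "start the container if it is stopped" step can be sketched with the docker command line. This is only a rough sketch: the function name ensure_container_running is hypothetical, and the container name you pass in must be the one Docker generated for you (stupefied_lama is just the example from earlier).

```shell
# Hypothetical helper: start a container only if it is not already running.
ensure_container_running() {
    name="$1"   # container name as listed in Docker Desktop

    # docker inspect prints "true" or "false" for the Running state.
    state=$(docker inspect -f '{{.State.Running}}' "$name") || return 1

    if [ "$state" = "true" ]; then
        echo "$name is already running"
    else
        docker start "$name"
    fi
}
```

For example, typing ensure_container_running stupefied_lama would start that container if needed, after which Attach to Running Container in VS Code should list it.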

Running the Pi Pico Project

You can transfer the .uf2 file to the Pi Pico by holding down the BOOTSEL button on the unpowered Pi Pico and plugging in the USB connection to the PC. A new USB drive will then appear. Drag the .uf2 file onto that drive, and it will transfer and start running.
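On Mac or Linux, the drag-and-drop step can also be done from a terminal. The sketch below is a hypothetical helper (flash_uf2 is not part of the project) that copies the firmware only if the target folder looks like a Pi Pico in BOOTSEL mode. It assumes the drive is already mounted; the mount point varies by system (for a Pi Pico it is often something like /media/yourname/RPI-RP2 on Linux or /Volumes/RPI-RP2 on a Mac), so check yours before relying on it.

```shell
# Hypothetical helper: copy a .uf2 file onto a Pi Pico that is in BOOTSEL mode.
flash_uf2() {
    uf2="$1"     # path to the downloaded firmware file
    drive="$2"   # mount point of the Pico drive (assumed, varies by system)

    # The BOOTSEL drive contains a file called INFO_UF2.TXT; use it as a
    # sanity check so the firmware is not copied into an unrelated folder.
    if [ ! -f "$drive/INFO_UF2.TXT" ]; then
        echo "$drive does not look like a Pi Pico in BOOTSEL mode" >&2
        return 1
    fi

    cp "$uf2" "$drive/"
}
```

You would call it with the path to your downloaded .uf2 file followed by the mount point of the drive.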

Opening Projects in a Container

If you want to, you can open up additional projects, since the previous one is minimal just for demo purposes.

Let’s say you wish to open a project on GitHub, and its repo URL is:

https://github.com/shabaz123/sound_fx_mixer.git

In VS Code, provided you have already attached to the container (for instance, if you’ve been working with the earlier project, or if you clicked on the lower-left Remote Connection icon and selected Attach to Running Container), close any open folder by going to File->Close Folder. Then, in the Explorer pane on the left side, instead of clicking on Open Folder, click on Clone Repository.

In the popup that appears, type the repo URL. You will be prompted to choose a folder to clone the project into. Type /workspaces and press Enter. You should now see the project contents, and you can edit the code as usual.

Now go to View->Terminal->New Terminal.

You’ll see a command prompt with the path set to the project folder, which is /workspaces/sound_fx_mixer in this example.

Type the following (the dot at the end is important!):

cp -r ../project1/.vscode/ .

cp ../project1/CMakePresets.json .

The two lines above copy some files from the earlier project1 folder into the current project. This is needed so that the development container can build the project successfully.
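If you expect to do this for several projects, the same copy can be wrapped in a small shell function that stops early when the reference project is missing. This is just a sketch: the name copy_build_config is hypothetical and not part of either repository, and it assumes the reference project contains the .vscode folder and CMakePresets.json file described above.

```shell
# Hypothetical helper (not part of either repository): copy the VS Code
# settings and CMake presets from a reference project into a new project.
copy_build_config() {
    src="$1"   # reference project, e.g. /workspaces/project1
    dst="$2"   # newly cloned project, e.g. /workspaces/sound_fx_mixer

    # Stop early if the reference project lacks the expected files.
    if [ ! -d "$src/.vscode" ] || [ ! -f "$src/CMakePresets.json" ]; then
        echo "missing .vscode or CMakePresets.json in $src" >&2
        return 1
    fi

    cp -r "$src/.vscode" "$dst/" && cp "$src/CMakePresets.json" "$dst/"
}
```

In the terminal inside the container, this could be used by typing copy_build_config /workspaces/project1 /workspaces/sound_fx_mixer.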

For this specific project, a pico-extras repository also needs to be downloaded and configured. This wouldn’t be the case for many other projects.

Using the screenshot below as a reference, select CMakePresets.json from the left side, and then add the path as shown below, i.e., append a comma to the PICO_SDK_PATH line, and then add a line:

"PICO_EXTRAS_PATH": "/workspaces/pico-extras"

Save the file.

In the terminal, go to the /workspaces folder (for instance by typing cd .. to go back one folder), and then type:

git clone https://github.com/raspberrypi/pico-extras.git
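Before building, it can be handy to confirm that both halves of the pico-extras setup are in place: the entry in CMakePresets.json and the cloned folder. The function below is a hypothetical sketch (check_pico_extras is not part of any repository) that simply searches the presets file for the PICO_EXTRAS_PATH key and checks that the folder exists. It does not validate the JSON itself.

```shell
# Hypothetical helper: check the pico-extras setup before building.
check_pico_extras() {
    project="$1"   # project folder containing CMakePresets.json
    extras="$2"    # expected location of the pico-extras clone

    # Look for the key that was added to the presets file by hand.
    if ! grep -q '"PICO_EXTRAS_PATH"' "$project/CMakePresets.json"; then
        echo "PICO_EXTRAS_PATH is not set in CMakePresets.json" >&2
        return 1
    fi

    # Confirm that the git clone step has been carried out.
    if [ ! -d "$extras" ]; then
        echo "pico-extras has not been cloned to $extras" >&2
        return 1
    fi

    echo "pico-extras looks ready"
}
```

For the example project, that would be check_pico_extras /workspaces/sound_fx_mixer /workspaces/pico-extras.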

Now click where it says Build at the bottom of the VS Code window. You may be prompted to select a configure preset, in which case simply click on Custom configure preset. After a minute or so, the code will be built!

Now you can download the .uf2 file from the container in the same way as before, by clicking on the out folder in the left side Explorer view, and then right-clicking on the .uf2 file there and selecting Download.

Summary

The Development Container technique allows for quickly developing Pi Pico projects without needing to set up the local PC with an SDK and toolchain. This blog post discussed how to create a container based on content from GitHub, and then use that container for obtaining additional projects from the Internet, and building the code into the .uf2 file which can be uploaded into the Pi Pico for execution.

Everything discussed in this blog post is quite flexible, and if you come up with changes to the workflow that better suit your needs, please share them, in case they help others too.

Thanks for reading!